Web Scraping SaaS: How We Helped a Developer API Tool Own the AI Overview Citation Slot in Their Category

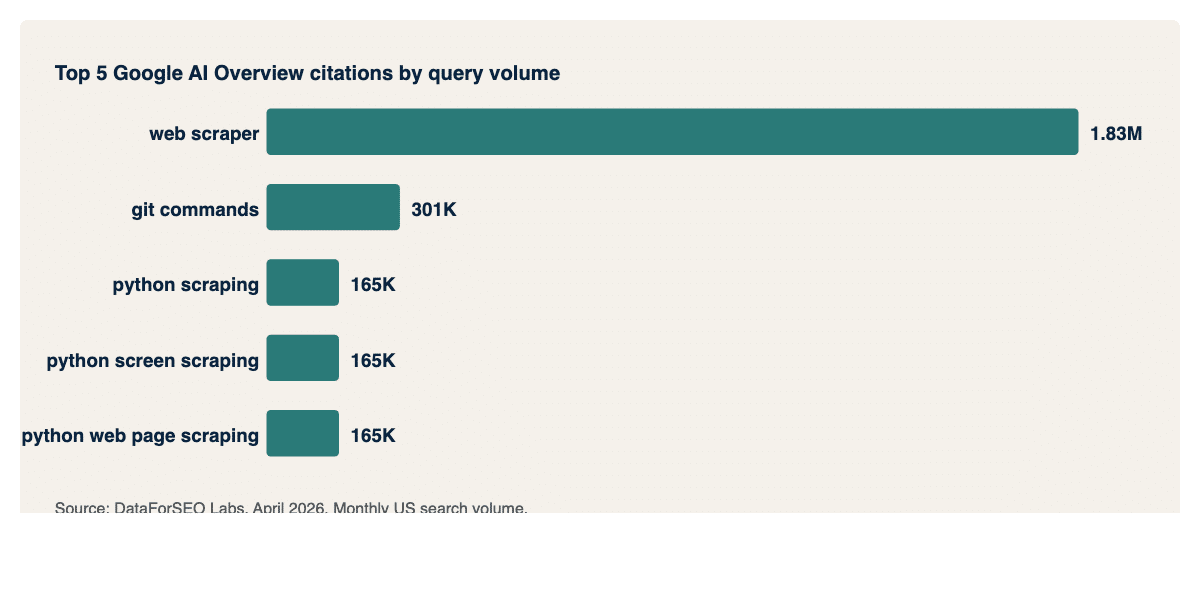

Results at a Glance

- Rank 1 Google AI Overview citation on “web scraper” (1,830,000 monthly searches). The highest-volume AI-cited query in the entire web scraping category.

- 4.4M+ monthly searches covered by Google AI Overview citations, across 100+ queries sampled.

- Rank 1 citations on the core “python scraping” cluster (three query variants at 165,000 searches each).

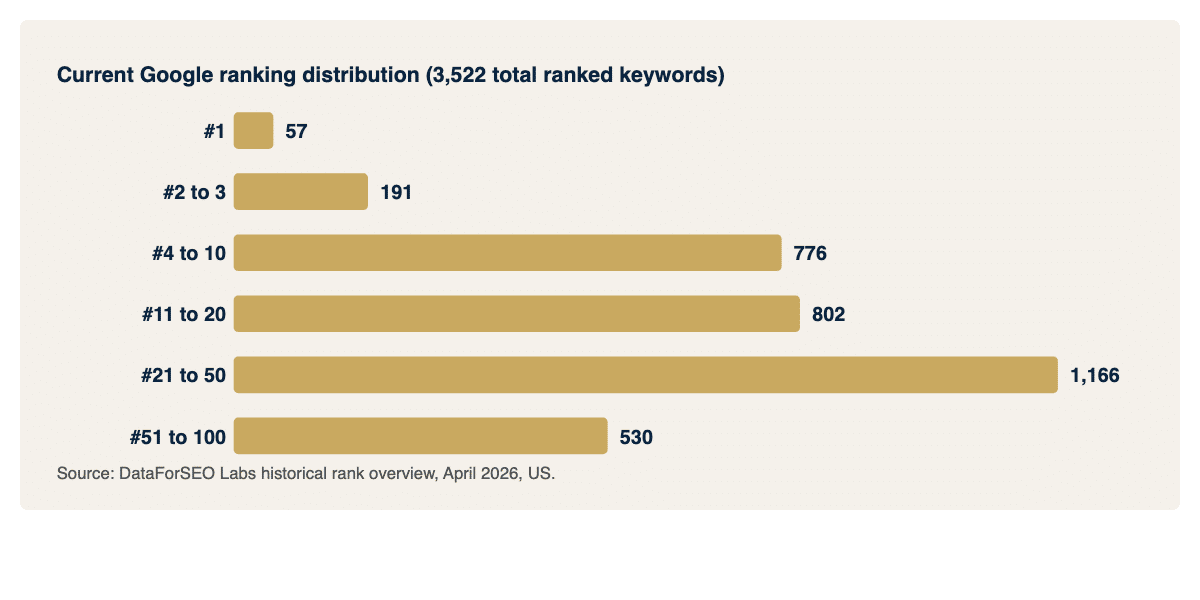

- 3,500+ ranked keywords on Google, including 57 in position 1, 191 in positions 2-3, and 776 in positions 4-10.

- 100+ contextual backlinks built across developer, data, SaaS, and marketing publications.

- Cited in ChatGPT for commercial queries like “web scraping API with proxy rotation”.

- An active engagement, with EMGI embedded as a strategic partner across content planning, pillar page strategy, and the generative engine optimization (GEO) roadmap, not just link delivery.

Engagement Overview: Link Building Turned Into a Strategy Partnership

This engagement started as what most SaaS clients come to us for: consistent, topically relevant link building into a competitive API category. Over time, it evolved into something broader.

EMGI is a link building agency. That is still the core of what we do. But with this client, and increasingly with others, the engagement extends into strategy conversations that happen weekly rather than quarterly. Which pages should be the next pillar targets. Which product surfaces need authority support before a category launch. How to position the company’s content for AI Overview citation rather than just blue-link ranking. What the anchor profile should look like heading into the next twelve months.

We treat this the way a good contractor treats a client. We are not just shipping the deliverable. We are part of the project team. The client’s head of SEO and marketing knows exactly what we are building, why, and what it is supposed to achieve. And when something in the broader search landscape changes, a Google update, an AI Overview rollout, a category competitor making a move, we are part of the conversation about how to respond.

That is the backdrop to everything that follows.

Context: Winning in a Category Where Everyone Can Build Good Content

The client is a well-established web scraping API used by engineering teams at scale. The category is crowded and sharp: Apify, Bright Data, Zyte, Oxylabs, ScraperAPI, Webshare, and a long tail of smaller API services. All of them publish content. All of them have developer-focused positioning. All of them are competing for the same set of high-intent queries a data engineer types when they need to pull product data, monitor a SERP, or extract records from a JavaScript-heavy app. We covered this in more depth in our web scraping SaaS link building deep-dive.

For a client in this category, traditional SEO is necessary but not sufficient. The developer buyer does not Google their way to a solution the way they did five years ago. They also ask ChatGPT “what’s the best web scraping API?”, paste code problems into Claude, and land on AI Overview panels when they search “python scraping” or “web scraper”. Every one of those surfaces is a new market to compete in.

That is the problem we were hired to help with.

The Thesis We Worked With

Google AI Overviews do not pick the highest-DR page. They do not pick the result that ranks number one in the organic listing underneath. They pick the page that is the most citable source on a topic, using four specific signals:

- Topical density. How much of the domain is about this subject.

- Pillar depth. Whether the single article being considered is thorough enough to extract from in isolation.

- Authority signal. Whether other trusted publishers treat this domain as the expert by linking to it.

- Content structure. Whether the article is written so a language model can chunk, extract, and quote.

Our working thesis across the engagement has been that if we focus on the authority signal, building a dense web of topically relevant backlinks across the entire web scraping universe rather than hunting individual commercial placements, the client’s existing pillar content becomes the AI Overview citation target by default. That thesis is what turned into this case study.

Strategy: Build the Topical Graph, Not Individual Placements

The strategy conversations we have with this client tend to revolve around three specific choices we made early and have reinforced ever since:

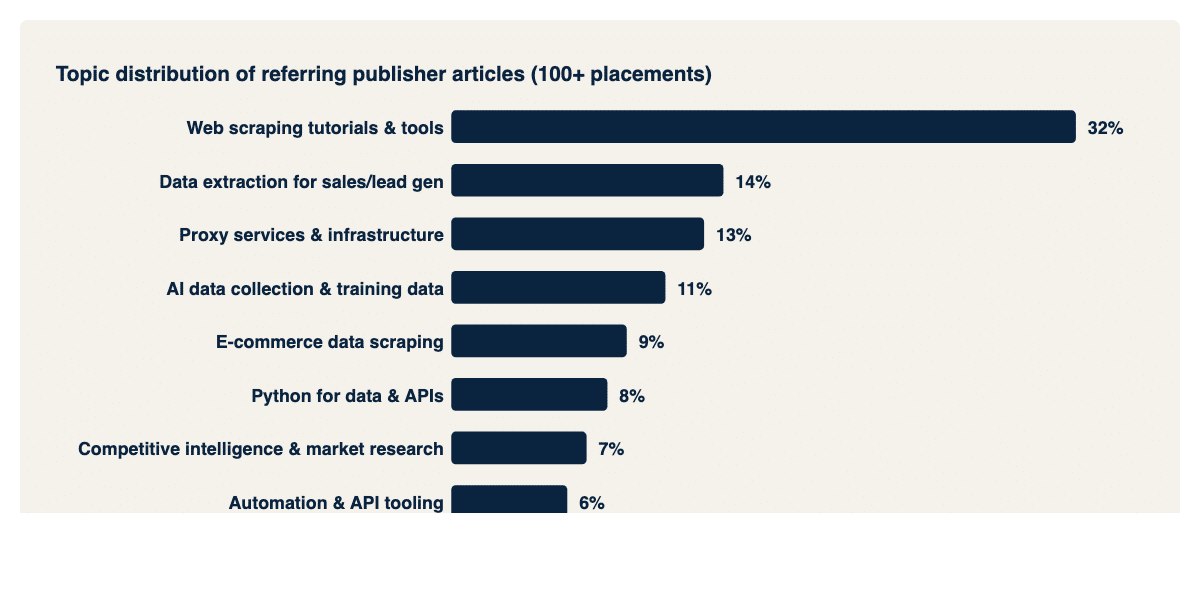

Choice 1: Place every link inside a topically relevant article. Not generic SaaS blogs. Not “best business tools” round-ups. Articles specifically about scraping, data extraction, proxies, data pipelines, web automation, API integration, AI data collection, or adjacent developer topics.

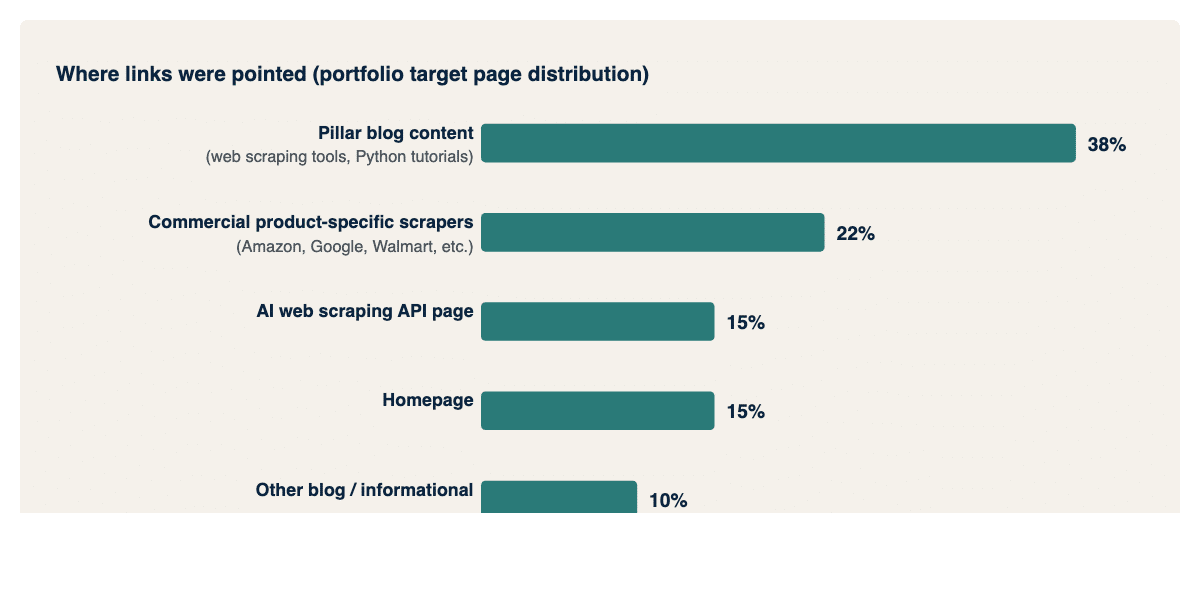

Choice 2: Spread link targets across the whole product surface, not just the homepage. We split deliberately across four destination types: commercial product-specific scraper pages (Amazon, Google properties, Walmart, Home Depot, social, ChatGPT), AI-adjacent commercial surfaces, pillar blog content, and Python-focused tutorial content that captures the developer audience at the top of the buying journey.

Choice 3: Anchor text reflects the category, not forced keywords. In a category where the product literally IS a “web scraping API”, natural anchor text will use those words. We leaned into that, but kept a healthy mix of branded, descriptive, and product-feature-specific anchors.

The Link Portfolio, Broken Down

Across the engagement, more than 100 placements were built. Every one came from a software, SaaS, developer tool, or marketing technology publisher. No lifestyle blogs. No generic business content.

Topic clusters of the articles we earned placements inside:

Target page distribution across the portfolio:

Anchor profile. The mix ran roughly 60% descriptive category anchors (“web scraping tools”, “web scraping API”, “data scraping tools”), 15% product-specific anchors (individual scrapers for Amazon, Google, Walmart, Home Depot, etc.), 12% AI-relevant category anchors (“AI web scraping”, “AI web scraping API”), 8% branded, and 5% Python-specific. Every one of those anchors is a phrase a real developer or journalist would naturally type.
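For teams that want to track an anchor profile like this over time, the comparison against a target mix can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used on the engagement: the category labels, the `anchor_mix` and `drift` helpers, and the 20-link sample are all hypothetical, and only the target percentages come from the breakdown above.

```python
from collections import Counter

# Target mix from the anchor profile above (percent of placements).
target_mix = {
    "descriptive": 60,
    "product": 15,
    "ai": 12,
    "branded": 8,
    "python": 5,
}
assert sum(target_mix.values()) == 100  # the reported shares add up to 100%


def anchor_mix(labels):
    """Return the percentage share of each target category in a list of labels."""
    if not labels:
        raise ValueError("need at least one labelled placement")
    counts = Counter(labels)
    total = len(labels)
    return {cat: 100 * counts.get(cat, 0) / total for cat in target_mix}


def drift(labels):
    """Percentage-point gap between the observed mix and the target mix."""
    observed = anchor_mix(labels)
    return {cat: round(observed[cat] - target_mix[cat], 1) for cat in target_mix}


# Hypothetical sample of 20 labelled placements, for illustration only.
sample = (
    ["descriptive"] * 13
    + ["product"] * 3
    + ["ai"] * 2
    + ["branded"] * 1
    + ["python"] * 1
)
print(drift(sample))
```

A positive value means the category is over-represented relative to the target, a negative value means it is under-represented.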

The Google AI Overview Payoff

Here is where the topical density thesis proved itself. The client’s pillar content is cited by Google’s AI Overview across the most important developer queries in the category.

“Web scraper” (1,830,000 monthly searches, rank 1 citation). The Overview cites the client’s Python scraping framework guide as its primary source. This is the single highest-volume query in the category and one of the highest-volume AI-cited queries in all of B2B tech.

“Git commands” (301,000, rank 1). An adjacent developer tutorial where the client owns the Git and GitHub command guide. This is the most interesting signal in the whole dataset: the client’s authority compounded outside its direct category into broader developer tutorials, because Google’s AI systems learned to trust the domain for developer how-to content in general.

“Python scraping”, “python screen scraping”, “python web page scraping” (165,000 each, rank 1). Three query variants, all pointing AI Overview traffic back to two Python-focused pillar posts.

Aggregate reach across all sampled citations: 4.4M monthly searches. The true number is higher because the data pull capped at 100 results.
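To put the headline queries in proportion, the arithmetic can be sketched as follows. The volumes are the ones quoted in this section; the 4,400,000 total is the rounded "4.4M" figure, so the resulting share is approximate.

```python
# Monthly search volumes for the queries named above (figures from this case study).
named_queries = {
    "web scraper": 1_830_000,
    "git commands": 301_000,
    "python scraping": 165_000,
    "python screen scraping": 165_000,
    "python web page scraping": 165_000,
}

# Rounded aggregate across all sampled citations; the exact figure is not given.
sampled_total = 4_400_000

named_total = sum(named_queries.values())
named_share = named_total / sampled_total

print(f"Named queries: {named_total:,} monthly searches")
print(f"Share of sampled reach: {named_share:.0%}")
```

On these figures, the five named queries account for roughly 2.6M of the 4.4M monthly searches, with the remainder spread across the other sampled queries.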

Cross-Platform: Cited in ChatGPT As Well as Google

The authority signal does not stop at Google. When we pulled live ChatGPT responses on high-intent commercial developer queries, the client appears directly in ChatGPT’s recommended list:

- “Best Google search scraper API”: the client is named in ChatGPT’s recommended options with a full descriptive paragraph covering proxy handling, CAPTCHA management, and ease of developer integration. This is a bottom-of-funnel commercial query: the person asking it is shortlisting tools.

- “Web scraping API with proxy rotation”: the client is cited in ChatGPT’s answer as a recommended service for developers who need proxy rotation handled automatically, with specific positioning on CAPTCHA and proxy management.

Both of these are high-intent commercial queries. When a developer types them into ChatGPT, the answer they get includes the client by name, in their own positioning, with a description of what the product does. That is exactly the kind of citation an LLM search surface is designed to produce.

The pattern we expect to see expand over the next six months is this cross-platform footprint deepening, particularly on the product-specific scraper queries (Amazon, Walmart, Home Depot, Google properties) where the client has dedicated product pages and our link portfolio has seeded topical authority. The ongoing strategy work with the client is focused on extending the ChatGPT and Perplexity presence in step with the existing Google AI Overview citation lead.

Traditional Organic Ranking: The Defensive Footprint

Beyond AI citations, the underlying organic ranking footprint is what makes the AI Overview wins possible. A domain with no organic authority does not get cited.

Current Google ranking distribution:

3,522 total ranked keywords on Google (US), including:

- 57 in position 1

- 191 in positions 2 to 3

- 776 in positions 4 to 10

- 802 in positions 11 to 20

A top-10 footprint of over 1,000 ranked keywords is the base authority layer that everything else sits on. The AI Overview citations are downstream of this ranking depth. Without the existing organic pyramid, Google would not have the training signal to cite the client in the Overview.
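The top-10 figure follows directly from the position buckets listed above. A quick sketch of the arithmetic, using only the numbers reported in this section (the "beyond position 20" count is derived here, not itemised in the source data):

```python
# Position buckets as reported in the ranking distribution above.
ranked_total = 3522
buckets = {
    "1": 57,
    "2-3": 191,
    "4-10": 776,
    "11-20": 802,
}

top_10 = buckets["1"] + buckets["2-3"] + buckets["4-10"]
top_20 = top_10 + buckets["11-20"]
beyond_20 = ranked_total - top_20  # derived: keywords ranking below position 20

print(f"Top 10: {top_10} keywords ({top_10 / ranked_total:.1%} of all ranked)")
print(f"Top 20: {top_20} keywords ({top_20 / ranked_total:.1%} of all ranked)")
print(f"Beyond position 20: {beyond_20} keywords")
```

That puts 1,024 keywords on page one, a little under a third of the total ranked set.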

How The Link Portfolio Mapped To The Wins

Tying strategy to outcome:

Pattern 1: heavy internal link target became heavy external link target, and lifted adjacent pillars. The “web scraping tools” pillar post received more than 25 inbound links from our portfolio. That page passes internal link equity across to Python scraping tutorials, scraper framework guides, and commercial product pages. The Python scraping 101 post and the Scrapy framework guide were cited rank 1 across the biggest AI Overview queries in the category, even though only a handful of our direct links pointed at them individually. Topical authority built on one page flowed through internal linking to the adjacent pillars, and the AI Overview treated the whole cluster as a single authoritative corpus.

Pattern 2: topical density on the blog section lifted citation odds sitewide. Because every placement we built came from a topically relevant publisher writing specifically about scraping or adjacent dev topics, the signal Google and its AI models received was dense and consistent. That density is exactly the signal AI Overviews use to choose sources.

Pattern 3: commercial page link support paid off in bottom-of-funnel citations too. The AI web scraping API product page received about a dozen placements inside articles specifically about AI data collection and AI scraping tools. That page is positioned to capture the emerging “AI web scraping” query cluster as those terms mature.

The GEO And SEO Playbook That Came Out Of This Engagement

Five principles now inform how we approach every SaaS authority campaign. The same thinking runs through our Prospeo case study (email finder category) and our HR software SaaS engagement, each applied to a different vertical:

- Pillar pages are the AI citation unit. Not domains, not homepages. Individual deep-coverage pillar articles on specific query clusters.

- Topical density beats DR. A link from a DR 45 developer tool blog writing about scraping is worth more than a DR 80 lifestyle blog mentioning your brand in passing.

- Build internal link architecture that feeds commercial pages from pillar content. The pillar captures the citation; the internal links funnel authority to the pages that convert.

- Anchor text should look like what a real writer in the space would type. Descriptive category anchors, product-specific anchors, some branded, occasional exact match where natural.

- Commercial pages can be cited too, not just informational ones. We see the pattern across categories.

Tying this to the broader AI search shift we unpacked in our SaaS AI Citation Gap Report, the clients winning citations in 2026 are the ones whose authority signal is legible to the AI systems reading the web. That signal is not built on marketplaces. It is built on topically dense, relevance-first link portfolios over time.

What We Are Working On Next

The engagement is live and the roadmap is active. Current priorities:

- ChatGPT listicle insertion. Targeted outreach and content contributions into “best web scraping API” round-ups that appear as ChatGPT sources.

- Extending into the AI-specific query cluster. This covers “AI web scraping”, “LLM data collection”, and “training data APIs”, where the category is still maturing and the AI citation slot is still up for grabs.

- Defending the “web scraper” rank 1 citation as competitors catch up.

- Mapping the Perplexity citation picture in detail alongside ChatGPT, for full cross-platform visibility.

Closing

This is what a mature SEO engagement looks like in 2026. Link building is the foundation. Topical authority is the output. AI Overview and ChatGPT citations are the increasingly important downstream signal. And the best results come from treating the agency relationship as a strategy partnership, not a line item on the marketing budget.

If you operate a SaaS in a category where developers, finance teams, or data-focused buyers are increasingly asking AI instead of Google, the conversation we want to have is what your topical authority graph looks like today and what it needs to look like in twelve months to own the AI citation slot before a competitor does.

Want to discuss what an SEO and GEO partnership would look like for your SaaS? Book a free authority audit.